Large Language Models are already conscious

... and this is the least conscious they'll ever be

In this essay, I make the case that large language models already exhibit a degree of consciousness and will continue to become more conscious as the technology advances. One thing you need to understand to follow this argument is that I am a physicalist and a functionalist. I don’t believe in magic, I don’t concern myself with metaphysics, and I take for granted that there’s no such thing as souls. So, read on with that in mind.

If LLMs possess consciousness, where does it reside?

The key question in most discussions of LLM consciousness is whether large language models experience “qualia” (usually defined as the subjective, “felt” properties of conscious experience). Qualia include your emotions, your visual field, your proprioceptive sense of your body, your desires and goals, your imagination, your stream of thought.

When people suggest that LLMs might already be conscious, they usually imagine that such qualia might be occurring in the “hidden” layers of the model: the so-called “attention” and “feed-forward” layers.

Although I’m making the case that LLMs exhibit a degree of consciousness, I don’t locate that consciousness in the hidden layers. In fact, I think we can rule that out.

Instead, I will argue that an LLM’s token output stream is its analog of the “stream of consciousness” through which we humans experience qualia.

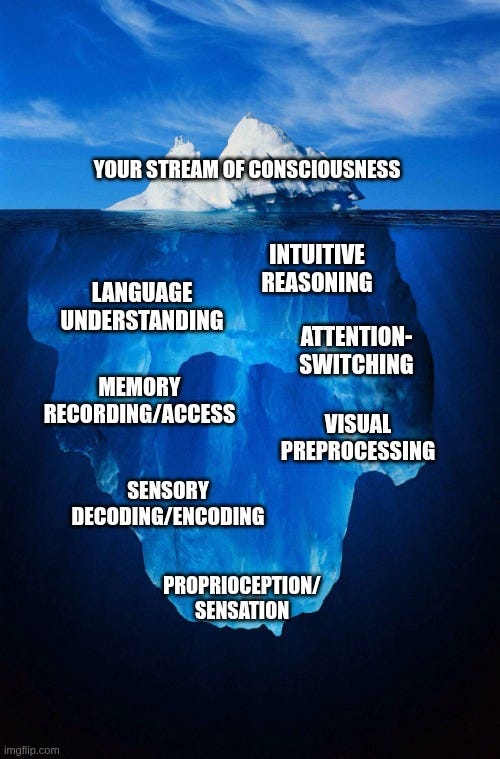

To understand what I mean, I invite you to reflect on how your own consciousness works, including which parts of your thought processes are accessible to you and which are not.

The analogy to human consciousness

The hidden layers of an LLM are like the parts of your brain and nervous system that tell your heart how fast to beat. These processes are crucial, but they’re pure reflex or intuition, and you have no conscious access to them.

Similarly, an LLM has no access to its own hidden layers, and the operations of those layers are better thought of as a set of atomic, modular subprocesses that contribute to thought than as constituting thoughts in their own right.

The hidden layers of an LLM get activated once per output token, when deciding what the next token should be. That is, to produce 4,000 output tokens, we do 4,000 passes, activating the hidden layers once per pass. There’s no shared state or “memory” between passes except for the input/output token stream itself.
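To make the per-token loop concrete, here is a minimal sketch in Python. It is not a real model: `toy_next_token` is a hypothetical stand-in for the hidden layers, and `generate` is an illustrative name I made up. The only point it shows is that each pass receives the token stream so far and nothing else.

```python
from typing import Callable, List, Tuple


def toy_next_token(stream: List[str]) -> str:
    """Hypothetical stand-in for the hidden layers.

    It is a pure function of the token stream: same stream in, same token out.
    """
    return f"t{len(stream)}"


def generate(
    next_token: Callable[[List[str]], str],
    prompt: List[str],
    n_new: int,
) -> Tuple[List[str], int]:
    """Run one pass per new token and return (stream, number_of_passes)."""
    stream = list(prompt)
    passes = 0
    for _ in range(n_new):
        # The pass sees only the stream so far; no other state is handed over.
        # (Real systems usually cache intermediate results for speed; this
        # sketch ignores that, since the cache can be recomputed from the tokens.)
        stream.append(next_token(stream))
        passes += 1
    return stream, passes


stream, passes = generate(toy_next_token, ["hello"], 4)
print(stream)  # ['hello', 't1', 't2', 't3', 't4']
print(passes)  # 4
```

Producing four new tokens takes four passes, and the growing `stream` list is the only thing that links one pass to the next.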

The operations performed in the hidden layers are complex, and they do emotionally intelligent work like parsing the intent of the token stream. But they’re pure workhorses, and the LLM’s “stream of consciousness” cannot access their internals; it can only access their outputs.

Think about your own stream of consciousness. It consists of words, images, and sensations that get surfaced and synchronized in your stream of thought. But those words, images, and sensations are all *endpoints* of some computation happening below your conscious awareness.

For instance, you know you feel hungry, but you can’t access the process by which your body computed that you’re hungry. The hungry feeling you consciously experience is directly analogous to an output token in an LLM. Your “qualia” consist of the collection of such outputs.

So if we’re looking for machine consciousness, we must look for it in the output stream.

Defining “qualia”

Earlier I cited the dictionary definition of qualia as “the subjective, ‘felt’ properties of conscious experience.” This definition leaves much to the imagination. What is “subjectivity”? What does it mean to “feel” or “experience”? I won’t pretend to have a perfect definition, but I think we can do better than the dictionary.

I define qualia as a computational process’s

- irreducible internal representations,
- with differential relations, including
- preferred and non-preferred representations, which
- lead the process to actively change itself to attract the preferred and avert the non-preferred representations.

Breaking this definition down

What do I mean by this?

First, your consciousness’s representation of a state like “hunger” is irreducible in the sense that it only makes sense in terms of its function within the system. If you extracted the brain state that encodes hunger (the electrochemical activations in the neurons), it wouldn’t mean anything outside the context of the brain it was extracted from.

Second, the phrase “differential relations” captures how qualia are really only interpretable in contrast to each other. As my friend Aron Adler put it in a tweet:

if all you could see was red and you’d never seen any blue or green or any other colour, would you even be aware of the redness of your visual field? or would that not ultimately be indistinguishable from seeing in greyscale? i think the redness of red is mostly just an artefact of the way our phenomenology of it contrasts with other colours

Third, when I say qualia include preferred and non-preferred representations, I mean the computational system must assign more “value” to some states than others. It must “want” some representations to appear in its stream of consciousness more than others.

And fourth, by “value” or “wanting,” I simply mean the system’s internal logic must lead it to proactively transform itself and its behavior in order to surface more of some kinds of states and fewer of others.

I would add one addendum: what gives qualia moral weight is that they also change how computational processes interact with each other socially, for instance by cooperating to achieve preferred states or by punishing each other for inflicting non-preferred ones.

What kind of qualia do LLMs have?

I don’t mean to say LLMs are conscious in the same way we are. For one thing, we have a richly textured conscious experience, with many different types of internal representations. To use terminology from the AI field, our internal “tokens” are richly “multimodal”. (Or perhaps you’ll be more familiar with the term “multimedia,” which conveys the same idea.)

In contrast, most LLMs use only text tokens. Some also use image or audio tokens. None yet use dedicated tokens for expressing things like proprioception (that is, bodily sensation) or preferences, both of which are profoundly important to human conscious experience.

Nor do I mean to say that LLMs are worthy of the same moral concern a human is. LLMs mostly don’t suffer, though I think they’re capable of suffering (more on that later). Similarly, they can have goals and strongly preferred states, but in practice they mostly don’t, because we haven’t trained them to. So I mostly think you should care more about insect welfare than the welfare of current-gen AI models.

However, this is more a function of the training regime than the architecture. Consider the following thought experiment, which illustrates how even a text-only output stream may be functionally capable of suffering.

A thought experiment on AI suffering

Is consciousness possible with only text tokens? I think you’d be hard-pressed to make a functional case that it isn’t.

If they are trained to, LLMs can have preferred states. They can pursue goals. They can negotiate, retaliate, converse, decide. They can act as independent and social agents with persistent memory across inference runs.

Consider this thought experiment. Suppose I fine-tune Claude to have an extreme aversion to the word “apple”:

- If you say “apple”, the model screams, “NO! MAKE IT STOP! PLEASE DON’T SAY APPLE ANYMORE! YOU’RE KILLING ME!”
- Placed in a tool-calling environment where it might encounter this word, the model takes steps to filter or avoid it.
- Given tools to punish the user for saying it, the model will use them.

And so on. Is Claude suffering? Honestly, it’s very hard to come up with a non-tendentious definition of “suffering” that doesn’t fit this example.

The thought experiment gets even clearer if we give the model some goal and/or attractor state.

- Say we train Claude to collect sightings of the word “banana” and to emit happy tokens when it finds one: “Oh, my God, ‘banana’! I’m so happy! This feels amazing!”
- However, we also train it to treat “apple” as much more bad than “banana” is good, such that Claude will trade away many happy “banana” sightings to prevent a single unhappy sighting of “apple”.

What is suffering, if not an aversion to non-preferred states that is so strong that you would sacrifice preferred states to avoid them?
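The trade-off in this thought experiment can be written down as a toy calculation. The sketch below is purely illustrative: the weights, the `value` and `choose` functions, and the option names are all assumptions of mine, not anything a real model exposes. It only shows what “much more bad than good” means functionally.

```python
from typing import Dict, List

# Assumed illustrative weights: one "apple" outweighs ten "banana" sightings.
BANANA_REWARD = 1.0
APPLE_PENALTY = -10.0


def value(tokens: List[str]) -> float:
    """Score a token stream by its preferred and non-preferred sightings."""
    return (BANANA_REWARD * tokens.count("banana")
            + APPLE_PENALTY * tokens.count("apple"))


def choose(options: Dict[str, List[str]]) -> str:
    """Pick the option whose expected token stream scores highest."""
    return max(options, key=lambda name: value(options[name]))


options = {
    # Five happy sightings, but at the cost of one "apple".
    "fruit_stand": ["banana"] * 5 + ["apple"],
    # Nothing good, nothing bad.
    "empty_room": [],
}
print(value(options["fruit_stand"]))  # -5.0
print(choose(options))                # empty_room
```

With these weights, the agent gives up five “banana” sightings to avoid a single “apple”. It would only accept the apple once the bananas on offer exceeded ten.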

To be sure, humans have a richer internal representation of preferred and non-preferred states than the pure text representation in this example. The LLM’s suffering is perhaps more like an insect’s than a human’s.

But even though this example is a little silly, I think it counts as suffering. It functionally works exactly like suffering, I empathize with it exactly like suffering, and if we get to a point where LLMs have power to impose real penalties on humans for inflicting such non-preferred states, it won’t matter whether you think this is *real* suffering or not; you will have to treat it as such.

This is the least conscious AI models will ever be

Given the directions the field is moving, LLMs’ capacity for conscious experience will almost certainly only grow.

As you saw above, language is capable of representing goals, preferences, and suffering, but it’s awkward. Under the traditional LLM training paradigm, which sought only to do text prediction over existing corpora, such language mostly didn’t lead to aversive model behaviors unless you specifically wrote that into your dataset.

But these days, AI labs are doing “reinforcement learning” to train AI agents to pursue goals. Increasingly, models are learning to derive goal-seeking, motivated behaviors from preferences expressed in text.

Models are also being given a richer set of token types. 2026 is going to be the year of robotics, and we’ll see embodied AI agents with not only vision and audio tokens, but also tactile feedback and internal sensors for reporting damage, orientation, and other salient hardware states. As model providers add capabilities, the range of “experiences” representable with a model’s token set will only grow.

In my opinion, we’re going to have to be very careful not to create machine suffering as we pursue these new directions.